It’s mid-2017 and the technologies surrounding the Internet of Things are reaching their Gartner Hype Cycle expectation peaks. While the more sensational IoT visions of salt shakers conspiring with smart refrigerators will dim, cloud-connected embedded devices will continue their decades-long perfusion into our environment. The power and utility of embedded device nodes acting as agents for powerful cloud software will become a fundamental force in consumer and industrial applications.

The development processes for embedded firmware and cloud applications have taken largely divergent paths. Embedded development has stayed close to the metal, focusing on coping with extremely limited computing resources. Cloud development has raced toward frameworks and abstractions that eliminate the individual hardware nodes as a developer concern and try to enable transparent access to computing resources limited only by budget. With an inevitable future of embedded and cloud software working together in increasingly complex ways, how will current software development techniques hold up?

Scurrying Through the Brush

Embedded devices are fun: they’re where our software encounters the real world, sensing, reacting, making things happen. Bringing an embedded product successfully to production involves meeting a lot of challenges increasingly unique to embedded systems:

- Low-level languages and tools.

- Fixed price, storage, communication, and power budgets, a different universe from evolving virtualized cloud environments.

- Real-time response requirements. Few mainstream developers have ever had to deal with hard real-time systems.

- High software update risk. A rigorous Agile cloud app might release to production weekly, daily, or continuously(!), but for embedded devices in the field a firmware update is still a hold-your-breath endeavor.

For most of software history, an embedded device meant the software was considered as immutable as the hardware: once it rolled off the assembly line, a software fix meant a technician visit or a recall. Embedded software development was closely coupled with embedded hardware development, with the same conservatism and rigor expected as for making physical parts. While consumer-oriented software became easier to develop, debug, revise, and update, embedded software continued to require deep hardware knowledge and sophisticated debugging skills. The common meaning of embedded has widened since then. Users still expect embedded devices to be simple, responsive, and essentially bug-free in operation, but there is now very low tolerance for systems that cannot be field-updated. Modern devices are expected to be connected, updated with bug fixes and features, and secure against exploits, now and in the future.

When cost, size, and power consumption are dominant design factors, embedded designs will continue to trade off powerful, developer-friendly chips in favor of low-resource, low-power chips that offer smaller, cheaper, longer-lasting (battery-wise) products for end-users. Given these continuing requirements for efficient, close-to-the-metal software implementations, the modern rich-OS-based, deep framework stacks that have made phone and cloud apps much easier to develop and scale are simply not feasible to use for efficient embedded implementations.

Ground Cover

Adding to the disparate development environments of embedded and cloud components is the recent emergence of a fog layer of network-local, medium-powered hubs that can concentrate, offload, and manage edge devices without requiring a round-trip to the cloud. Local controller hubs are not new to industrial designers, but this capability will increasingly be available to all IoT designers—and increasingly required to meet product performance goals.

The future of fog computing is looking like it will involve shared operating environments with relatively limited resources. Whether using a shared fog-as-service environment or controlling your own local gateway device, fog development tools and capabilities will probably be like neither your cloud environment nor your embedded environment.

View from the Tree-Tops

In the realm of cloud-targeted application development, resources and frameworks are becoming ever more abstract: Bare metal machines gave way to virtual machines, which in turn are giving way to containers; software components are built to communicate via lightweight APIs and message buses, so developers no longer need to consider where a component is deployed or how many instances of it are running. In this space the concepts of fixed storage, finite compute cycles, hardware failures, and all the concerns of deploying to physical computers are abstracted away. Developers are allowed to think purely in terms of data flows through the application, communication over invisibly fast network meshes, and storage in limitless reliable data pools.

With distributed heterogeneous designs, large amounts of development effort are still spent simply getting all the different components—and the teams developing the components—communicating with each other. The embedded part of the project often spends most of its time simply getting the software to come up on the hardware within resource constraints and without crashing while the cloud developers are adding and deploying new features on a weekly basis.

Soaring Above

Whether they come from a lone designer building something for herself or from an organization using a human-centered design process, the most effective products are conceived and designed from the perspective of an end-user. The more time software developers can spend working on business logic and user experience, the closer the end result will be to the original user-centric vision.

So how do we get full-stack IoT development—heterogeneous embedded, fog, and cloud—to a place where a singular product vision can be implemented as a singular software development effort?

Arch

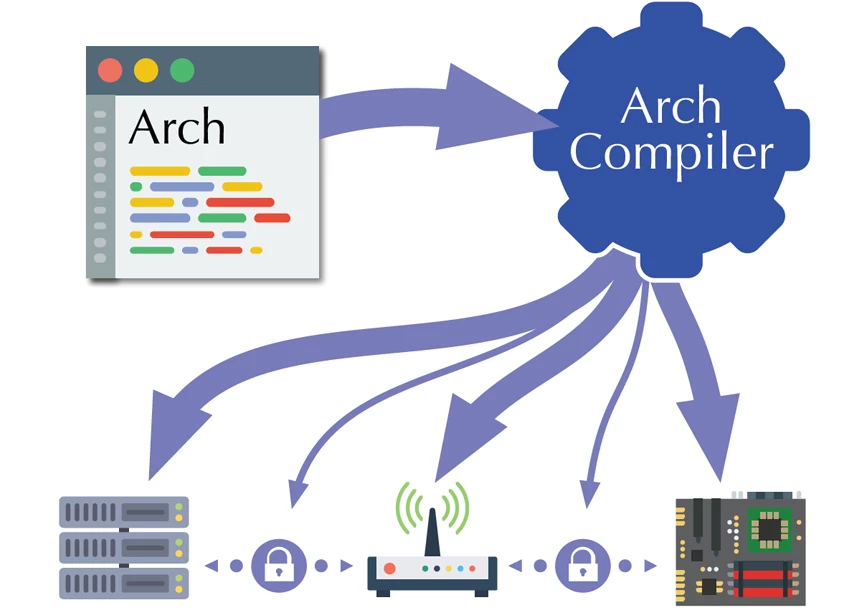

Arch is a language, development environment, and runtime system that considers the heterogeneous targets of an IoT product as a single, coherent whole. Think of Arch as a virtual operating system—developers work on a single piece of application code in a domain-optimized language while the actual heterogeneous targets are abstracted away.

The Arch vision is to provide an abstraction layer over embedded microcontrollers, secure communication protocols, fog near-edge resources, and cloud data processing. The Arch language, framework libraries, and runtime system allow an application developer to rise above low-level architecture concerns and focus on data flow and business logic, while the build and deploy system partitions the program into target-specific components and synthesizes secure communications channels between them.

With Arch coordinating build, test, and deployment of all nodes in an IoT application universe, developers can adopt an Agile approach to their entire IoT application, ensuring reliable, deterministic control of security patches and feature updates.

Design Philosophy

Given sufficiently expressive abstractions, high-level programs can be rendered into efficient, effective run-time code spanning the entire landscape of IoT hardware targets. When writing code to implement an IoT product vision, developers should have tools that offer a programming paradigm aligned with the problem space of connected, distributed, event-driven, streaming, physically-based products.

Some very useful abstractions are gaining interest in the cloud space, but few have achieved popularity in the embedded space where they could arguably be the most valuable:

- Message Passing Primitives, supported at a low level, allow standard libraries and user code to implement safe concurrency and asynchronicity while giving the compiler wide options for optimization.

- Reactive Streams are an ideal way to model the event- and data-driven workflows encountered in IoT applications, as well as the laggy, asynchronous communication environments that connected, embedded devices usually deal with.

- Hierarchical State Machines can efficiently and safely represent the state-based logic required by physical computing use cases and protocol codecs.

- Rich Primitive Datatypes can ensure safety and efficiency of data operations across all targets, including common MCU types like bit fields, fixed-point, read-only, write-only, and range-constrained or other usage-constrained types.
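To make one of these abstractions concrete, here is a minimal sketch of a hierarchical state machine in plain Python. It is not Arch syntax, and the `State` and `Machine` classes and the sensor lifecycle are hypothetical names invented for illustration. It shows only the core idea: an event that a state does not handle bubbles up to its parent, so shared behavior is declared once.

```python
class State:
    """A state with an optional parent; unhandled events bubble up to the parent."""

    def __init__(self, name, parent=None, transitions=None):
        self.name = name
        self.parent = parent
        # Maps an event name to the name of the target state.
        self.transitions = transitions or {}


class Machine:
    """Holds the current state and dispatches events through the hierarchy."""

    def __init__(self, states, initial):
        self.states = {s.name: s for s in states}
        self.current = self.states[initial]

    def dispatch(self, event):
        """Look for a transition in the current state, then in each ancestor."""
        state = self.current
        while state is not None:
            target = state.transitions.get(event)
            if target is not None:
                self.current = self.states[target]
                return True
            state = state.parent
        return False  # No state in the hierarchy handles this event.


# Hypothetical connected-sensor lifecycle: both "online" substates inherit
# the link_lost transition from their parent instead of repeating it.
online = State("online", transitions={"link_lost": "offline"})
idle = State("idle", parent=online, transitions={"sample": "reporting"})
reporting = State("reporting", parent=online, transitions={"sent": "idle"})
offline = State("offline", transitions={"link_up": "idle"})

machine = Machine([online, idle, reporting, offline], initial="idle")
```

Calling `machine.dispatch("sample")` moves the machine to `reporting`, and a later `machine.dispatch("link_lost")` is handled by the `online` parent and moves it to `offline`. A production design would also need entry and exit actions, which this sketch leaves out.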

Traditional approaches to compiler-based code optimization involve analysis of low-level code to deduce what was intended by the developer. By providing domain-appropriate high-level abstractions, a software development environment can enable a compiler to make powerful, large-scale optimizations because it has an explicit understanding of the software’s intent. By offering low-level support for language constructs suited to the distributed physical computing domain, Arch gives the compiler and run-time system tremendous latitude for programmatically analyzing, rearranging, and optimizing code across target architectures and communication channels.
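The following toy sketch illustrates why explicit intent helps. It is plain Python, not Arch, and everything in it is an assumption made for illustration: the `Stage` record, the `cheap` hint, and the `partition` rule are hypothetical and do not describe how any real compiler decides placement. The point is only that when a stream pipeline is declared as data, a tool can inspect it and split it across targets without changing the result.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Stage:
    name: str
    kind: str      # "map" or "filter"
    fn: Callable
    cheap: bool    # Hypothetical hint: small enough to run on the device.


def run(stages, source):
    """Apply the declared stages lazily, in order, to an iterable source."""
    stream = iter(source)
    for stage in stages:
        if stage.kind == "map":
            stream = map(stage.fn, stream)
        else:
            stream = filter(stage.fn, stream)
    return stream


def partition(stages):
    """Split at the first non-cheap stage: the prefix runs on the device,
    the remainder runs upstream (fog or cloud)."""
    for i, stage in enumerate(stages):
        if not stage.cheap:
            return stages[:i], stages[i:]
    return stages, []


# Hypothetical temperature pipeline: raw counts in tenths of a degree.
pipeline = [
    Stage("to_celsius", "map", lambda counts: counts / 10, cheap=True),
    Stage("over_limit", "filter", lambda temp: temp > 80, cheap=True),
    Stage("format_alert", "map", lambda temp: f"overheat: {temp:.1f} C", cheap=False),
]

device_stages, cloud_stages = partition(pipeline)
raw_counts = [500, 820, 900]

# Running the two halves back to back gives the same result as the whole.
split_result = list(run(cloud_stages, run(device_stages, raw_counts)))
whole_result = list(run(pipeline, raw_counts))
```

Here the conversion and threshold filter land on the device, so only readings that cross the limit ever need to be sent upstream. A real system would also have to serialize values across the boundary and secure the channel, which this sketch ignores.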

The View Ahead

Most people who hear about the Arch vision are convinced that it would be fantastic—if it can be built. If you’re in that group, follow our blog for future developments. If you’re excited about this concept, leave a comment. If you think we’re crazy, let us know; tell us what we’re missing. We’re talking to silicon manufacturers, open source communities, embedded developers, cloud developers… all stakeholders in the IoT / distributed-embedded-connected development landscape. And we’d like to talk to you.
